"Are we there yet?"

The age-old question evokes images of family vacations that involve long drives with the kids packed into the station wagon like sardines. You keep telling yourself that it's all about the journey, but when you really think about it, your target is the destination and you just want to get there. Shortcuts, your cell phone, and Google Maps are your friends. Toll booths and traffic jams are not.

What is Latency?

Latency is defined as the delay between when a data packet was transmitted and when it was received.

More specifically, latency is the average delay seen over a period of time.

Variation in that delay from one packet to the next is tracked as jitter (see the blog article, Where Does VoIP Jitter Come From).
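The difference between average latency and jitter can be illustrated with a short Python sketch. The sample values are made up for illustration, and the jitter function here is a deliberately simple mean of consecutive differences, not the smoothed estimator that RTP (RFC 3550) reports.

```python
from statistics import mean


def average_latency_ms(samples_ms):
    """Average delay over a measurement window, in milliseconds."""
    return mean(samples_ms)


def mean_jitter_ms(samples_ms):
    """Mean absolute difference between consecutive delay samples.

    This is a simplified definition chosen for illustration only.
    """
    if len(samples_ms) < 2:
        return 0.0
    diffs = [abs(b - a) for a, b in zip(samples_ms, samples_ms[1:])]
    return mean(diffs)


# Hypothetical one-way delay samples (ms), not real measurements.
samples = [20.0, 22.0, 19.0, 25.0, 20.0]
print("average latency (ms):", average_latency_ms(samples))
print("jitter (ms):", mean_jitter_ms(samples))
```

Two links can share the same average latency and still differ widely in jitter, which is why the two values are tracked separately.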

In a perfect world, packets transmitted would arrive just a few milliseconds later at their destination no matter how far the receiver is located from the sender, or how many devices were involved in the communications path. In the real world, there are many delays that occur on networks that can prevent packets from arriving quickly.

How Is Latency Measured?

Latency on networks is typically measured in milliseconds (ms). In many cases, latency is reported as a round-trip measurement. This is because single-direction latency cannot be measured accurately unless both transmitter and receiver have very precise, synchronized clocks.

| Note: | Network Time Protocol or NTP (RFC 5905) is not precise enough to permit uni-directional latency measurement. Precision Time Protocol or PTP (IEEE 1588) is precise enough, but it is not typically implemented on most networks. |

If latency is reported as a round-trip measurement, check whether the reported value is the entire round-trip time or whether it has been divided by two to approximate a uni-directional measurement.
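As a rough sketch of that bookkeeping in Python, the helper below normalizes a reported value to a one-way estimate. The function names are hypothetical, and halving the round-trip time assumes the forward and return paths are symmetric, which is not guaranteed on real networks.

```python
def one_way_estimate_ms(round_trip_ms):
    """Estimate one-way latency by halving the round-trip time.

    Assumes a symmetric path; asymmetric routing makes this only
    an approximation.
    """
    return round_trip_ms / 2.0


def normalize_to_one_way(value_ms, is_round_trip):
    """Return a one-way figure regardless of how the tool reported it."""
    return one_way_estimate_ms(value_ms) if is_round_trip else value_ms


# A tool reporting 250ms round-trip and one reporting 125ms one-way
# describe the same estimated one-way latency.
print(normalize_to_one_way(250, is_round_trip=True))
print(normalize_to_one_way(125, is_round_trip=False))
```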

What Causes Latency?

There are many reasons that packets can be delayed as they move towards their destination:

- Physical Distance: Packets going across town will have a much lower latency than packets going across the country. One would tend to think that a bit placed on a link would instantaneously be reflected at the far end, but the speed of light has its limitations (186,000 miles per second), and this affects network data transmissions as well.

- Misconfigured QoS: If QoS is misconfigured, it can add latency as the wrong packets may be getting delayed in a buffer when other traffic is present.

- Bandwidth Constrained Links: If a link always has high utilization, then packets must be buffered on the interface until the link has available bandwidth to transmit everything. If this is a common occurrence on an interface, then latency will always be incurred.

- Too Many Network Devices: Each network device involved in a communications path will add a little bit of latency to the conversation. For example, if there are 30 different network devices involved and each one adds 2ms of latency due to its own internal processing limitations (backplane limitations, CPU limitations, buffering), then 60ms of latency will be incurred from just the devices along the path.

- Serialization Delay: Even if a slow link is not overutilized, there is a delay incurred just to get the packets onto the wire. Imagine a situation where you are transmitting a 300-byte packet over a 1200bps link. 300 bytes = 2400 bits, so it would take two seconds just to get the bits onto the wire.
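Several of the causes above reduce to simple arithmetic. The following Python sketch shows one way to estimate serialization, per-device, and queueing delay. The function names are hypothetical, and the packet size, link speeds, and queue depth in the example calls are illustrative assumptions, not measurements.

```python
def serialization_delay_s(packet_bytes, link_bps):
    """Time to clock one packet onto the wire: bits divided by bit rate."""
    return packet_bytes * 8 / link_bps


def device_delay_ms(device_count, per_device_ms):
    """Total processing delay if every device adds the same amount."""
    return device_count * per_device_ms


def queueing_delay_s(queued_bytes, link_bps):
    """Time a new packet waits behind data already buffered on the interface."""
    return queued_bytes * 8 / link_bps


# Assumed example: a 1500-byte packet on a 1Mbps link.
print("serialization (s):", serialization_delay_s(1500, 1_000_000))

# The article's example: 30 devices adding 2ms each.
print("devices (ms):", device_delay_ms(30, 2))

# Assumed example: 64,000 bytes already queued on a 10Mbps link.
print("queueing (s):", queueing_delay_s(64_000, 10_000_000))
```

Treating every device as adding the same delay is a simplification; in practice the per-device figure varies with load and hardware.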

| Note: | The transmission medium selected (fiber optic cable or copper ethernet or wireless) generally does not make a difference in latency, as the velocity of propagation (VoP) is roughly the same. |
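The effect of physical distance can be estimated with a short Python sketch. It uses the 186,000 miles per second figure from the text. The velocity factor of 0.67 (signals travelling at roughly two-thirds of the speed of light in the medium) and the 2,500-mile distance are assumptions for illustration, not values taken from this article.

```python
SPEED_OF_LIGHT_MILES_PER_S = 186_000  # figure quoted in the article


def propagation_delay_ms(distance_miles, velocity_factor=0.67):
    """One-way propagation delay over a given cable distance.

    velocity_factor is the assumed fraction of the speed of light at
    which the signal travels in the medium (an illustrative default).
    """
    speed = SPEED_OF_LIGHT_MILES_PER_S * velocity_factor
    return distance_miles / speed * 1000


# Hypothetical cross-country cable run of 2,500 miles.
print("one-way (ms):", round(propagation_delay_ms(2500), 1))
print("round-trip (ms):", round(2 * propagation_delay_ms(2500), 1))
```

This delay is a floor that no equipment upgrade can remove. Only a shorter path reduces it.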

What Does High Latency Sound Like?

VoIP over high-latency links may not have any audio quality problems, but the delay creates awkward conversations involving interruptions, talking on top of someone else, and strange silences because you don't know if the other person has stopped talking yet.

What Is Acceptable Latency?

One-way latency for VoIP/UC should be below 125ms (250ms round-trip). Users will tend to notice higher latencies.

| Note: | The highest latency environment for voice communications was the Apollo moon landing back in the late '60s. The distance involved meant that all speech was delayed about 1.25 seconds. This equates to 1250ms! |
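The threshold above is easy to apply in a monitoring script. Here is a minimal Python sketch, assuming the 125ms one-way limit stated in this article. The function name is hypothetical.

```python
ONE_WAY_LIMIT_MS = 125  # one-way limit stated in the article


def is_acceptable_for_voip(latency_ms, round_trip=False):
    """Check a latency figure against the one-way or round-trip limit."""
    limit = ONE_WAY_LIMIT_MS * 2 if round_trip else ONE_WAY_LIMIT_MS
    return latency_ms < limit


print(is_acceptable_for_voip(100))                   # one-way, under the limit
print(is_acceptable_for_voip(240, round_trip=True))  # round-trip, under the limit
print(is_acceptable_for_voip(1250))                  # the Apollo delay from the note
```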

How Do You Detect Latency?

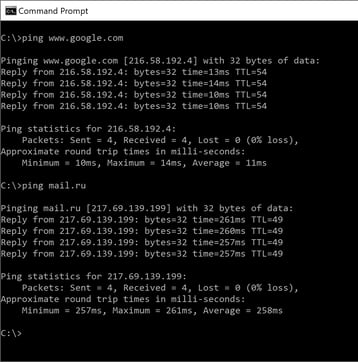

Latency is typically detected via pinging the remote IP address and noting the resulting time.

This example shows the time required to ping www.google.com from my location as ranging from 10ms to 14ms.

Uni-directional latency for this may be 5ms to 7ms.

The time required to ping mail.ru from my location ranges from 257ms to 261ms.

Uni-directional latency for this may be 128ms to 130ms.

While ping is a simple indicator, it may not reflect the QoS treatment or packet-size-based queueing applied to different applications along the path. In these cases, it is recommended to use a call simulator that properly emulates the RTP footprint on the network and reports the latency actually seen by that application with that QoS marking.
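For quick checks, ping results can be summarized programmatically. The Python sketch below parses round-trip times out of captured ping text and derives a one-way estimate. The sample output is hypothetical (it uses a documentation-range IP address), the function names are illustrative, and the exact wording of ping output varies by operating system, so the pattern may need adjusting.

```python
import re

# Matches "time=12.3 ms", "time=12ms", and "time<1ms" style fields.
TIME_PATTERN = re.compile(r"time[=<]([\d.]+)\s*ms")


def parse_ping_times_ms(ping_output):
    """Extract round-trip times (ms) from captured ping output text."""
    return [float(value) for value in TIME_PATTERN.findall(ping_output)]


def summarize(times_ms):
    """Summarize round-trip samples and estimate one-way latency."""
    avg = sum(times_ms) / len(times_ms)
    return {
        "min_rtt_ms": min(times_ms),
        "max_rtt_ms": max(times_ms),
        "avg_rtt_ms": avg,
        "one_way_estimate_ms": avg / 2,  # assumes a symmetric path
    }


# Hypothetical captured output, not a real measurement.
sample_output = """\
64 bytes from 192.0.2.1: icmp_seq=1 ttl=57 time=10.2 ms
64 bytes from 192.0.2.1: icmp_seq=2 ttl=57 time=14.0 ms
64 bytes from 192.0.2.1: icmp_seq=3 ttl=57 time=12.1 ms
"""

times = parse_ping_times_ms(sample_output)
print(summarize(times))
```

The same caveat applies here as to ping itself: these figures describe ICMP traffic and may not match what RTP packets experience under QoS.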

How Do You Solve Latency Problems?

Reducing latency should be the goal of both network and telecom teams. Here are some considerations that will help to reduce latency:

- Fix Suboptimal Route Paths: If packets going from San Francisco to Los Angeles need to be routed through headquarters in Atlanta because the MPLS network is designed as hub-and-spoke, they make two unnecessary cross-country trips to reach their destination. Consider creating a full-mesh network where San Francisco can communicate directly with Los Angeles.

- Replace Slow Network Equipment: If the company's headquarters location has a small firewall that is supporting 20 VPN tunnels, it may be over-burdened since it has to do all of the encryption for the VPN as well as filtering for Internet traffic. This device may add significant latency to all conversations due to its workload.

- Upgrade Slow Links: If a link is 10Mbps or slower, it may be adding latency even if the connection is not heavily utilized.

- Eliminate Bandwidth Limitations: If a link in your network is constantly saturated, it will add latency even if it is running at 50Gbps because packets will still have to be buffered before being transmitted.

- Fix Incorrectly Configured QoS: If QoS is misconfigured and is queueing high-priority VoIP RTP packets in a low-priority buffer, they will be stuck waiting with all of the data packets before being transmitted.
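To get a feel for the cost of the hub-and-spoke route in the first item above, the Python sketch below compares straight-line distances for the direct and hub paths. The city coordinates are approximate, the 0.67 velocity factor is an assumption, and real cable routes are longer than great-circle distances, so treat the result as a lower bound and not a prediction.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_MILES = 3959
SPEED_OF_LIGHT_MILES_PER_S = 186_000  # figure quoted in the article
VELOCITY_FACTOR = 0.67                # assumed fraction of light speed

# Approximate (latitude, longitude) values, for illustration only.
SAN_FRANCISCO = (37.77, -122.42)
LOS_ANGELES = (34.05, -118.24)
ATLANTA = (33.75, -84.39)


def great_circle_miles(a, b):
    """Haversine distance between two (lat, lon) points in miles."""
    lat1, lon1, lat2, lon2 = map(radians, (*a, *b))
    h = (sin((lat2 - lat1) / 2) ** 2
         + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_MILES * asin(sqrt(h))


def propagation_ms(miles):
    """One-way propagation delay for a given distance."""
    return miles / (SPEED_OF_LIGHT_MILES_PER_S * VELOCITY_FACTOR) * 1000


direct = great_circle_miles(SAN_FRANCISCO, LOS_ANGELES)
via_hub = (great_circle_miles(SAN_FRANCISCO, ATLANTA)
           + great_circle_miles(ATLANTA, LOS_ANGELES))

print("direct miles:", round(direct))
print("via hub miles:", round(via_hub))
print("extra one-way delay (ms):", round(propagation_ms(via_hub - direct), 1))
```

The extra delay shown is propagation only. The hub route also adds the processing and queueing delay of every additional device along the longer path.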

Review our white paper or contact us with questions about how PathSolutions can solve call quality and many other VoIP issues.